The $144m Mistake – Why Verification Must Always Come First

The Coalition of Cyber Investigators present a high-profile case study illustrating why verification must always come first, before any investigative report is published.

Paul Wright & Neal Ysart

3/11/2026 · 9 min read

Introduction

The integrity of investigative reporting - particularly where financial allegations intersect with public figures - depends on disciplined verification, corroboration, and evidential accounting. In February 2026, a publicly accessible online narrative claimed that approximately $2.1 billion in financial activity linked to Jeffrey Epstein had been audited. It quickly attracted considerable online attention and was reposted on X, Reddit, and even business-focused platforms such as LinkedIn. The article (Version One) presented structured tables, monetary volumes, transaction counts, and implied verified fund flows.

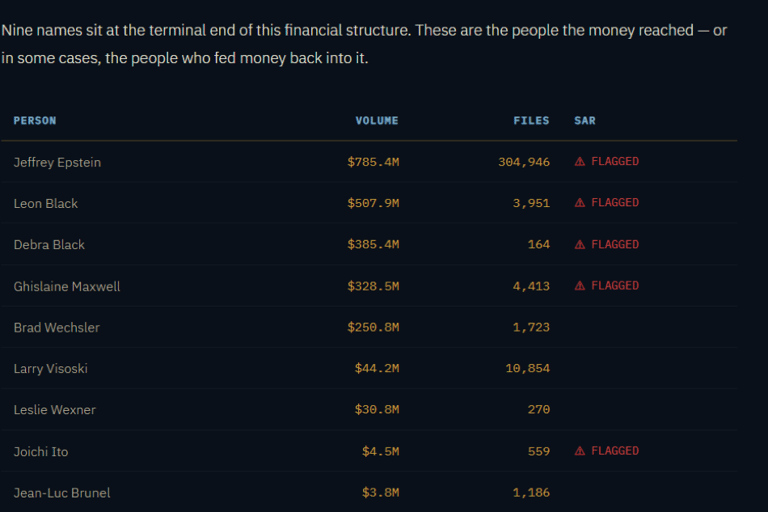

What really captured readers' attention was the key persons table, which incorrectly included the names of Donald Trump, Bill Clinton, and Prince Andrew and attributed transactions totalling $64.7M, $17.6M, and $11.9M to them, respectively. Within hours, however, significant revisions were made, and these three names disappeared from the table entirely (Version Two).

In short, the Natural Language Processing (NLP) model used to collect the data appeared to have treated names and dollar amounts appearing near each other in text as if they were financial transactions, even when they came from news articles or legal filings. Those low‑confidence extractions were aggregated and published before being verified against actual bank documents, so under proper review, the claimed totals collapsed. Eight individuals, with a combined attributed total of over $144M, were the subject of a subsequent clarification, which showed that their totals came from NLP name-and-dollar co-occurrences in the document corpus, not from bank records.

The sequence of publication, archival capture, repository modification, and narrative reframing provides a case study in how incomplete verification - whether intentional or inadvertent - can contribute to the generation and propagation of misinformation and, in certain circumstances, disinformation.

Publication and Archival Record

Preservation of Online Content and Archived Instances

Version One of the webpage claimed that $2.1 billion in financial records connected to Jeffrey Epstein had been audited and that individuals involved had been identified in the analysis. The page formed part of the “Epstein Forensic Finance” repository and contained narrative material asserting conclusions regarding financial transactions and associated individuals.

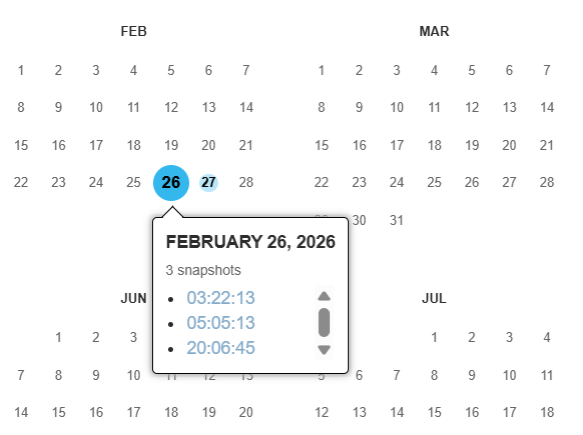

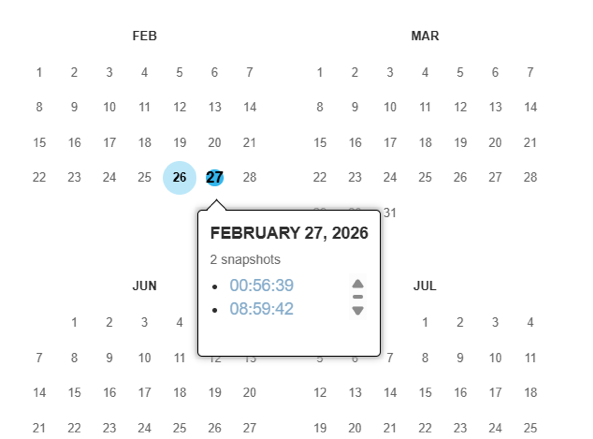

The webpage was observed as publicly accessible on 26 February 2026. Subsequent monitoring indicated that the page was later taken offline and then restored on 27 February 2026. Because web content can change or disappear without notice, the material was preserved using standard Open-Source Intelligence (OSINT) evidence-preservation practices, including capturing it with a forensic webpage capture tool and verifying it against archived versions preserved by the Internet Archive Wayback Machine. Such captures preserve the state of a webpage at a specific time and support later verification of the content that was publicly available at the time of observation.

Independent archival records indicate that the Wayback Machine recorded multiple snapshots of the page during the relevant period. Archived instances were recorded on:

The existence of multiple archived captures confirms that the webpage content was publicly accessible during those periods and demonstrates that the material was subject to revision or temporary removal between captures. Preservation through both forensic capture and third-party web archiving enables investigators to establish a verifiable historical record of the webpage content, allowing later comparison with subsequent versions and supporting transparency in the investigative process.
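As an illustration of how archived captures can be listed and compared, the following minimal sketch builds a query for the Wayback Machine's CDX index and parses its JSON output, in which the first row is a header. It is not the procedure used in this investigation. The page address, timestamps, and digests in the sample payload are hypothetical placeholders, and the helper names are invented for this example.

```python
import json
from urllib.parse import urlencode

CDX_ENDPOINT = "https://web.archive.org/cdx/search/cdx"


def build_cdx_query(page_url, date_from, date_to):
    """Build a CDX query URL listing captures between two dates (YYYYMMDD)."""
    params = {"url": page_url, "from": date_from, "to": date_to, "output": "json"}
    return f"{CDX_ENDPOINT}?{urlencode(params)}"


def parse_cdx_rows(payload):
    """Convert CDX JSON output (header row first) into a list of dicts."""
    rows = json.loads(payload)
    if not rows:
        return []
    header, *captures = rows
    return [dict(zip(header, row)) for row in captures]


def content_changed(captures):
    """True if the captures carry more than one distinct content digest."""
    return len({capture["digest"] for capture in captures}) > 1


# Hypothetical payload in the CDX column layout; none of these values are real.
SAMPLE_PAYLOAD = json.dumps([
    ["urlkey", "timestamp", "original", "mimetype", "statuscode", "digest", "length"],
    ["org,example)/report", "20000101000000", "https://example.org/report",
     "text/html", "200", "PLACEHOLDERDIGESTONE", "1000"],
    ["org,example)/report", "20000102000000", "https://example.org/report",
     "text/html", "200", "PLACEHOLDERDIGESTTWO", "900"],
])

captures = parse_cdx_rows(SAMPLE_PAYLOAD)
print(build_cdx_query("example.org/report", "20000101", "20000131"))
print([capture["timestamp"] for capture in captures], content_changed(captures))
```

The generated URL can be fetched with any HTTP client. Differing digests between two captures suggest that the page content changed between them, which is a prompt for closer comparison rather than a finding in itself.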

Forensic Capture and Web Archiving

Forensic OSINT (Webpage Capture Tool) and Data Captures

OSINT techniques are frequently used to identify and collect publicly available information from websites and online platforms. Where such material may be relevant to an investigation, it is important that the information is captured in a manner that preserves evidential integrity. Web content is inherently volatile and can be modified, deleted, or replaced without notice. As a result, investigators should capture relevant webpages as soon as practicable after identification to preserve the state of the information at the time it was observed.

Forensic webpage capture tools, such as Forensic OSINT, are designed to preserve online material in a structured and reproducible manner. These tools typically record the full webpage content, associated resources, timestamps, and the source URL, often generating a verifiable file format and cryptographic hash values. This process demonstrates that the captured content has not been altered after collection and allows the material to be reviewed or reproduced later if required.

Capturing webpages in an evidential manner is particularly important where online content may later be removed or modified. Websites may change due to routine updates, deliberate alteration, or attempts to remove material following public exposure or investigative attention. Early capture, therefore, preserves a contemporaneous record of the information as it existed at a specific time and supports later comparison or verification against subsequent versions.

Proper preservation of OSINT data also supports transparency and repeatability within investigative processes. Recording acquisition details - including the date and time of capture, the method used, and any generated verification data - helps ensure that the collected material can be independently assessed and relied upon as part of an evidential record.
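The acquisition details described above can be sketched as a simple capture record. This is a minimal illustration of the general idea, hashing the captured bytes and recording when and how they were obtained. It does not represent how Forensic OSINT or any other specific tool works internally, and the field names, function names, and sample URL are assumptions made for this example.

```python
import hashlib
from datetime import datetime, timezone


def make_capture_record(source_url, content, method):
    """Record where, when, and how content was captured, plus its SHA-256 hash."""
    return {
        "source_url": source_url,
        "captured_at_utc": datetime.now(timezone.utc).isoformat(),
        "method": method,
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
    }


def verify_capture(record, content):
    """Check that content still matches the hash stored at capture time."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


# Hypothetical captured bytes; in practice these would be the saved page.
captured = b"<html><body>Version One</body></html>"
record = make_capture_record("https://example.org/page", captured, "manual save")

print(verify_capture(record, captured))
print(verify_capture(record, b"<html><body>Version Two</body></html>"))
```

Any later change to the stored bytes produces a different hash, so a reviewer who recomputes the hash can confirm that the material is the same as what was originally collected.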

Verifiable Historical Record of the Webpage Content

Using HyperText Markup Language (HTML) Comparison as an Investigative Tool

HTML comparison provides a structured method for identifying whether webpages are related and for determining how a website has changed over time. By analysing differences in page source - including metadata, scripts, comments, structural elements, and linked resources - an investigator can identify indicators of shared development, reused templates, or common ownership that may not be visible through visual inspection alone.

The technique is particularly useful in repository revision analysis, where archived or previously captured versions of a webpage are compared against current versions to identify specific changes. Such changes may include altered text, modified links, replaced scripts, or the removal of material relevant to an investigation. Comparing historical captures enables investigators to reconstruct earlier states of a website and identify when substantive changes occurred.

HTML comparison also assists in dataset contraction. Rather than examining entire site collections, the process isolates the elements that differ between versions or recur across related sites. This allows the investigative dataset to be reduced to the artefacts most likely to have evidential significance, improving analytical clarity and supporting defensible reporting.
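The comparison and contraction steps described above can be sketched with Python's standard `difflib` module. This is one possible approach, not necessarily the tooling used in this case, and the two HTML fragments, names, and amounts below are hypothetical placeholders rather than content from the pages discussed.

```python
import difflib


def diff_html(old_html, new_html, old_label="version_one", new_label="version_two"):
    """Return a unified diff of two page sources, compared line by line."""
    return list(difflib.unified_diff(
        old_html.splitlines(),
        new_html.splitlines(),
        fromfile=old_label,
        tofile=new_label,
        lineterm="",
    ))


def changed_lines(diff):
    """Reduce a unified diff to its removed and added lines only."""
    removed = [line[1:] for line in diff
               if line.startswith("-") and not line.startswith("---")]
    added = [line[1:] for line in diff
             if line.startswith("+") and not line.startswith("+++")]
    return removed, added


# Hypothetical page sources standing in for two captured versions.
OLD_HTML = """<table>
<tr><td>Person A</td><td>$1.0M</td></tr>
<tr><td>Person B</td><td>$2.0M</td></tr>
</table>"""

NEW_HTML = """<table>
<tr><td>Person A</td><td>$0.8M</td></tr>
</table>"""

removed, added = changed_lines(diff_html(OLD_HTML, NEW_HTML))
print("Removed:", removed)
print("Added:", added)
```

Only the lines that differ are returned, which is the contraction effect: the unchanged markup drops out and the reviewer is left with the rows that were removed or altered.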

Comparing the two versions of the ‘$2.1 billion in financial activity linked to Jeffrey Epstein had been audited’ webpage showed that the revision implemented the following substantive changes:

Removal of three high-profile individuals previously listed with associated financial volumes.

Reclassification of those entries from implied financial flows to “NLP co-occurrence noise.”

Adjustment of Leslie Wexner’s financial total from $37.2M to $30.8M.

Modification of Suspicious Activity Report (SAR) flag status for Joichi Ito.

Introduction of explicit SAR methodology language referencing confidence thresholds and dataset sources.

Removal of approximately thirty top-level dataset entities from embedded JavaScript objects.

Elimination of Epstein Files Transparency Act (EFTA) references associated with removed individuals.

Reduction of ownership metadata attributes.

And more…

No changes were made to the page structure, styling, or layout. The revisions were content-layer and dataset-layer modifications rather than a technical redesign.

The absence of structural change, combined with substantive evidentiary alteration, is notable: visual authority remained consistent while underlying assertions shifted.
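The distinction between structural change and content change can be tested separately. The sketch below uses Python's standard `html.parser` to fingerprint a page in two ways: the set of tag and class combinations it uses (a rough proxy for template and styling) and the visible text it carries. The choice of fingerprint is an assumption made for illustration, and the sample pages are hypothetical.

```python
from html.parser import HTMLParser


class PageFingerprint(HTMLParser):
    """Collect the tag/class pairs a page uses and the text it contains."""

    def __init__(self):
        super().__init__()
        self.template = set()   # (tag, class) pairs: a rough layout fingerprint
        self.text = []          # non-empty text nodes: the content layer

    def handle_starttag(self, tag, attrs):
        self.template.add((tag, dict(attrs).get("class")))

    def handle_data(self, data):
        stripped = data.strip()
        if stripped:
            self.text.append(stripped)


def fingerprint(html):
    parser = PageFingerprint()
    parser.feed(html)
    parser.close()
    return parser.template, parser.text


def compare_layers(old_html, new_html):
    """Report separately whether the template or the content changed."""
    old_template, old_text = fingerprint(old_html)
    new_template, new_text = fingerprint(new_html)
    return {
        "structure_changed": old_template != new_template,
        "content_changed": old_text != new_text,
    }


# Hypothetical pages: same template, one row removed and one figure altered.
OLD_PAGE = ('<h1 class="title">Audit</h1><table class="people">'
            '<tr class="row"><td>Person A</td><td>$1.0M</td></tr>'
            '<tr class="row"><td>Person B</td><td>$2.0M</td></tr></table>')
NEW_PAGE = ('<h1 class="title">Audit</h1><table class="people">'
            '<tr class="row"><td>Person A</td><td>$0.8M</td></tr></table>')

print(compare_layers(OLD_PAGE, NEW_PAGE))
```

A result showing unchanged structure alongside changed content matches the pattern described above: the page looks the same to a reader while the assertions it makes have been revised.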

Evidential Standards and Methodological Concerns

The reclassification of removed individuals as “NLP co-occurrence noise” suggests that earlier table entries may have been derived from automated extraction methods rather than from independently corroborated transaction records.

Automated natural language processing systems identify proximity patterns between named entities and numerical values. Without entity-resolution safeguards and confirmed transactional validation - such as bank-level reconciliation, documented SAR filings through agencies such as the Financial Crimes Enforcement Network (FinCEN), or independently verified financial instruments - such associations remain inferential.

Proximity does not equate to proof.
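A deliberately naive sketch shows how this failure mode can arise. It is not the model used by the author of the original publication, whose implementation is not known to us. It simply pairs any listed name with any dollar amount found within a fixed character window. The sample text, the name "Person A", the window size, and the empty ledger of confirmed transactions are all hypothetical.

```python
import re

AMOUNT = re.compile(r"\$\d+(?:\.\d+)?[MB]?")


def naive_cooccurrence(text, names, window=80):
    """Pair each name with every dollar amount within `window` characters."""
    hits = []
    for name in names:
        for name_match in re.finditer(re.escape(name), text):
            for amount_match in AMOUNT.finditer(text):
                if abs(amount_match.start() - name_match.start()) <= window:
                    hits.append((name, amount_match.group()))
    return hits


def corroborated(hits, confirmed_transactions):
    """Keep only pairs that also appear in independently confirmed records."""
    return [hit for hit in hits if hit in confirmed_transactions]


# Hypothetical text: the amount has nothing to do with the person named.
TEXT = ("A news article reported that Person A attended a dinner. "
        "The same filing mentions a $5.0M wire between two unrelated companies.")

hits = naive_cooccurrence(TEXT, ["Person A"])
print(hits)                       # -> [('Person A', '$5.0M')]
print(corroborated(hits, set()))  # -> []
```

The extractor reports a pairing even though the text describes no transaction involving the named person. Checked against confirmed records, which are empty here, nothing survives, and that gap is the difference between an inferential association and a verified transaction.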

The Coalition of Cyber Investigators has articulated foundational principles relevant to this incident, including:

Independent reproducibility of findings.

Multi-source corroboration prior to publication.

Clear differentiation between analytical inference and verified fact.

Transparent articulation of confidence thresholds.

Explicit documentation of methodological limitations.

The addition of SAR methodology disclosure in the revised HTML suggests a post-publication clarification of evidentiary thresholds. Such transparency is essential; however, transparency introduced after dissemination does not fully mitigate the reputational and informational effects of publishing before verifying.
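The principles listed above can be expressed as a simple pre-publication gate. The following is a sketch under stated assumptions, not a Coalition standard: the threshold values, field names, and source labels are hypothetical, and real criteria would need to be set and documented for each investigation.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    subject: str
    amount_usd: float
    confidence: float   # extraction confidence between 0.0 and 1.0
    sources: tuple      # labels of independent sources that corroborate it


def classify(finding, min_confidence=0.9, min_sources=2):
    """Label a finding 'verified' only if it clears both stated thresholds."""
    enough_confidence = finding.confidence >= min_confidence
    enough_sources = len(set(finding.sources)) >= min_sources
    return "verified" if enough_confidence and enough_sources else "inference"


def publishable(findings, **thresholds):
    """Return only the findings that classify as verified."""
    return [f for f in findings if classify(f, **thresholds) == "verified"]


# Hypothetical findings with placeholder subjects and values.
findings = [
    Finding("Entity A", 1_000_000.0, 0.95, ("bank_statement", "court_exhibit")),
    Finding("Entity B", 2_000_000.0, 0.95, ("news_article",)),
    Finding("Entity C", 3_000_000.0, 0.40, ("news_article", "legal_filing")),
]

for finding in findings:
    print(finding.subject, classify(finding))
```

Stating the thresholds as explicit parameters keeps them visible and reviewable before publication, and findings labelled as inference can still be reported, provided they are presented as such.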

Misinformation and Disinformation Pathways

This case illustrates how both misinformation and disinformation can emerge from the same sequence of events.

Misinformation Pathway:

An investigative author publishes findings derived in part from automated extraction outputs. High-profile names appear with precise monetary figures, conveying forensic certainty. Subsequent review identifies artefactual associations. Revisions are implemented.

Disinformation Pathway:

Screenshots or archived versions of the earlier publication are circulated after corrections have been made. These materials are presented as enduring evidence, detached from subsequent clarifications.

The precision of numerical presentation - e.g., $64.7M or $17.6M - creates cognitive authority. Humans interpret decimal specificity as evidential strength. However, computational precision can coexist with methodological weakness.

Once initial exposure occurs, corrections seldom propagate at the same rate. The reputational effect persists irrespective of revision logs.

Professional Accountability and Forensic Expectations

Forensic accounting, by definition, is characterised by evidential sufficiency, audit trail validation, and conservative publication thresholds. Reports with significant financial implications ordinarily undergo thorough, layered review prior to public release.

Rapid post-publication revision, particularly involving the removal of named individuals and recalibration of financial totals, raises legitimate questions concerning:

Pre-publication validation processes.

Peer review or secondary audit mechanisms.

Documentation of extraction confidence thresholds.

Evidential criteria distinguishing artefact from verified transaction.

This observation does not presume malicious intent. It underscores structural vulnerability when investigative outputs outpace verification frameworks.

Broader Implications for OSINT and Public Trust

The discipline of open-source intelligence relies upon public trust. When high-profile allegations appear and are then materially revised within short intervals, two risks arise:

Diminished credibility of the individual author.

Erosion of confidence in OSINT methodologies more broadly.

In an environment where Artificial Intelligence (AI)-assisted extraction tools are increasingly utilised, the distinction between automated analytical artefact and corroborated evidence must be unambiguous. Failure to maintain that distinction invites interpretative manipulation.

The subsequent publication of a narrative titled “The Verification Wall” suggests recognition of the evidential tension inherent in the initial release. Verification, however, must function as foundational infrastructure rather than retrospective reinforcement.

Conclusion

The February 2026 publication and revision sequence provides a contemporary case study in the necessity of rigorous verification, corroboration, and evidential accounting prior to dissemination.

Key lessons include:

Automated extraction outputs must be independently validated before attribution of financial implications.

Repository availability and dataset transparency should precede public claims.

Archival capture will preserve initial assertions regardless of later correction.

Precise numerical presentation does not substitute for evidential sufficiency.

Post-publication methodological clarification, while necessary, does not eliminate reputational impact.

Data is not inherently true. It is structured information requiring interpretation. Without disciplined verification, structured information can become a persuasive narrative. And persuasive narrative, when insufficiently corroborated, may contribute - knowingly or unknowingly - to the creation and distribution of misinformation or disinformation.

In investigative practice, particularly where allegations involve public figures and significant financial volumes, the threshold for publication must exceed that for computational discovery.

From an investigative perspective, despite the author's hard work, the credibility of the results is significantly weakened by the presence of versions containing incorrectly interpreted data and inaccurate attribution of transaction values to high-profile individuals, the very mention of whom is almost guaranteed to attract intense scrutiny.

Notwithstanding the subsequent revisions and corrections, the evidence points to a case in which important findings were published but were neither verified nor validated. In legal proceedings, this lack of verification could be a fatal flaw, leading to the dismissal of an entire case.

Verification is not an editorial enhancement; it is the foundation upon which investigative legitimacy rests.

Authored by:

The Coalition of Cyber Investigators, Paul Wright (United Kingdom) & Neal Ysart (Philippines).

©2026 The Coalition of Cyber Investigators. All rights reserved.

The Coalition of Cyber Investigators is a collaboration between

Paul Wright (United Kingdom) - Experienced Cybercrime, Intelligence (OSINT & HUMINT) and Digital Forensics Investigator;

Neal Ysart (Philippines) - Elite Investigator & Strategic Risk Advisor, Ex-Big 4 Forensic Leader; and

Lajos Antal (Hungary) - Highly experienced expert in cyberforensics, investigations, and cybercrime.

The Coalition unites leading experts to deliver cutting-edge research, OSINT, Investigations, & Cybercrime Advisory Services worldwide.

Our co-founders, Paul Wright and Neal Ysart, offer over 80 years of combined professional experience. Their careers span law enforcement, cyber investigations, open source intelligence, risk management, and strategic risk advisory roles across multiple continents.

They have been instrumental in setting formative legal precedents and stated cases in cybercrime investigations and contributing to the development of globally accepted guidance and standards for handling digital evidence.

Their leadership and expertise form the foundation of the Coalition’s commitment to excellence and ethical practice.

Alongside them, Lajos Antal, a founding member of our Boiler Room Investment Fraud Practice, brings deep expertise in cybercrime investigations, digital forensics, and cyber response, further strengthening our team’s capabilities and reach.

The Coalition of Cyber Investigators, with decades of hands-on experience in cyber investigations and OSINT, is uniquely positioned to support organisations facing complex or high-risk investigations. Our team’s expertise is not just theoretical - it’s built on years of real-world investigations, a deep understanding of the dynamic nature of digital intelligence, and a commitment to the highest evidential standards.